AI has made it easier than ever before to generate compliance training content. With tools like HeyGen and Synthesia, you can turn a script into a video in minutes. LLMs can generate slides, images, scripts, and quizzes from your policies in seconds. Authoring tools like Articulate can package everything into SCORM for LMS deployment.

Individually, each of these tools work well, but become challenging when you try to turn them into entire courses as a part of your multinational compliance program. That’s where the friction really starts.

How do we know? We have used all of these tools (and continue to use many of them) in our process. We’ve also talked to hundreds of compliance teams who went down the build-it-yourself path using tools like HeyGen, Synthesia, Articulate, and LLMs stitched together.

Here are the five problems they almost always encounter.

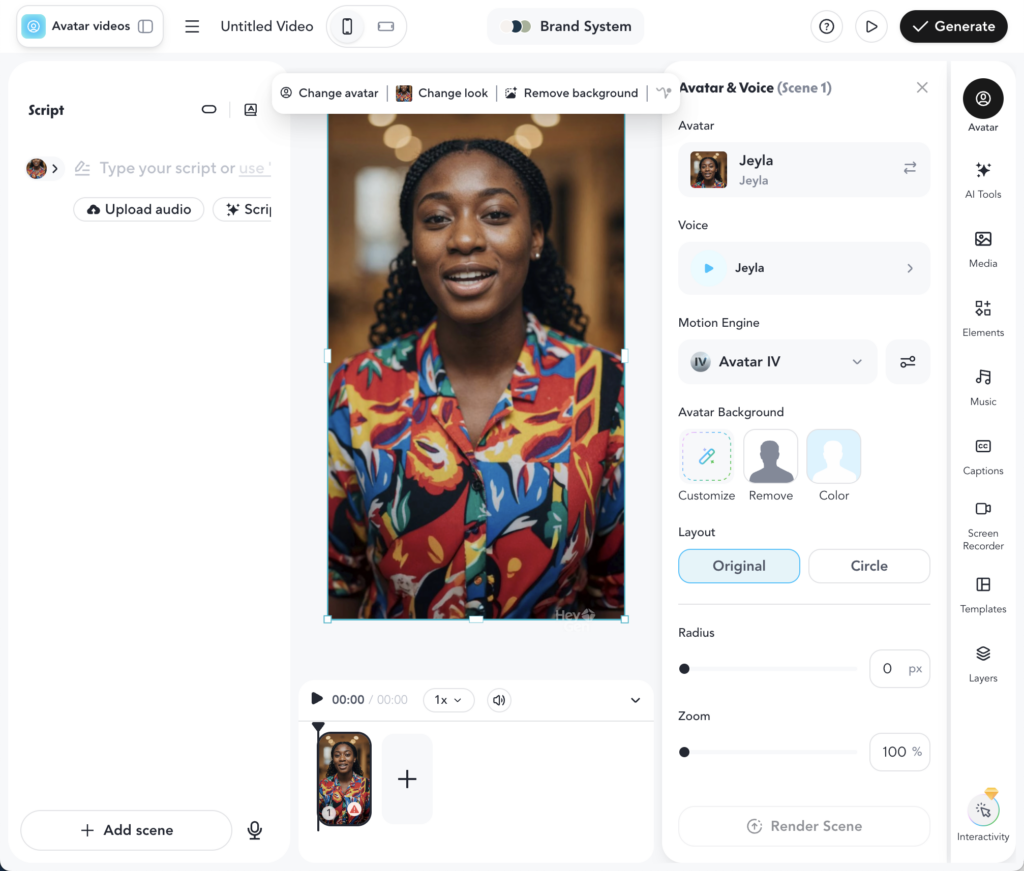

1. AI Video Tools Are Useful, But Need to be Used Sparingly for Long Form Training

Tools like HeyGen and Synthesia are genuinely impressive when you’re creating short, structured content. You can take a clean script, pair it with an avatar, and produce a polished video quickly.

These AI avatars have become remarkably lifelike, yet many have commented that you can still spot them because they have a certain uncanniness to them. Sometimes that can be offputting to your audience, but where things start to break down is when you lean too heavily on this style of video.

As the runtime increases, delivery can feel very repetitive to the learner. The cadence of the avatar doesn’t have much variation, and over time it starts to feel robotic. For short explainers and quick scenarios, it’s often just fine, but for a 30–60 minute annual training, it becomes a real engagement issue with employee and executive complaints galore.

What actually fixes this

Video works best as one component of a broader training experience, not the foundation of it. The teams that get this right are designing their courses intentionally, mixing formats, augmenting video with quizzes, interactive components, and other text and visuals. In doing so they ensure they are using the right kind of video where it adds clarity rather than forcing everything through it.

2. Translation Creates File and Course Complexity

On the surface, AI translation feels like a solved problem. You can take a script or video and generate localized versions quickly. The issue is the format those tools output.

For each language, you typically receive a separate set of assets: a video file, an audio track, and a caption file. None of these are inherently connected across languages. They are just parallel outputs.

To turn that into actual training, teams move into an authoring tool like Articulate and rebuild the course for each language by swapping in the localized assets.

At that point, scale starts to work against you. Let's say you support 10 languages. You now have:

- 10 Video Files

- 10 Audio Tracks

- 10 Subtitle Files

- 10 versions of the same course

- 10 SCORM packages

- 10 separate courses to maintain in your LMS

There’s no shared structure between them. Every version is its own object, with significant potential for human error. A former CECO told us about a time they accidentally deployed their Code of Conduct training to their whole English-speaking executive team in Vietnamese.

What actually fixes this

The underlying issue is treating language as a file output instead of a system capability.

When language is handled at the platform level, you don’t create separate courses. You maintain a single course with a language layer that can be toggled by the learner.

That changes the equation completely:

- One course instead of many

- One SCORM package instead of many

- Learners choose the language most comfortable to them

It removes the duplication that drives most of the maintenance burden. The key takeaway here is to ensure your providers have truly solved the translation problem for each course, and makes the administration truly turnkey.

3. Small Content Changes Cascade Into Full Rebuilds Across the Stack

Once you’ve built training across multiple tools and in multiple languages, even minor updates can become surprisingly heavy.

A simple change to a policy or a line of text can require:

- Updating the source content

- Regenerating video in HeyGen or Synthesia

- Re-running translations

- Exporting a new set of assets

- Rebuilding each language-specific course in Articulate

- Re-exporting all SCORM files

- Re-uploading everything into the LMS

This is not a theoretical edge case. It’s how real compliance teams handle changes to training content as their policies, regulations, and risks evolve.

Over time, this creates a system where teams hesitate to make small improvements because the downstream effort is so high.

What actually fixes this

You need a single source of truth for the content, with automation handling everything downstream.

When content is updated in one place, all dependent elements need to update automatically:

- Translations

- Media assets

- Course structure

Without that, every change becomes a manual rebuild, and the system becomes harder to maintain with each iteration.

4. Copy/Paste Workflows Between LLMs and Authoring Tools Don’t Scale

A lot of teams are quietly dealing with this one. They’re checking the “we use AI” box, but with a lot of manual effort along the way.

The workflow often looks like:

- Generate content in an LLM

- Copy it into Articulate or another authoring tool

- Format it into slides or modules

- Go back to the LLM to refine

- Copy updated content back in

- Repeat across slides, quizzes, and interactions

At a small scale, this feels manageable. At enterprise scale, it becomes a drag on velocity.

The issues show up as:

- Time spent on manual formatting

- Inconsistent tone across slides

- Difficulty making bulk edits

- Increased risk of outdated or mismatched content

Making a simple change across an entire course, like changing the word “happy” to “glad” shouldn’t require touching each component individually, yet all too often it does.

What actually fixes this

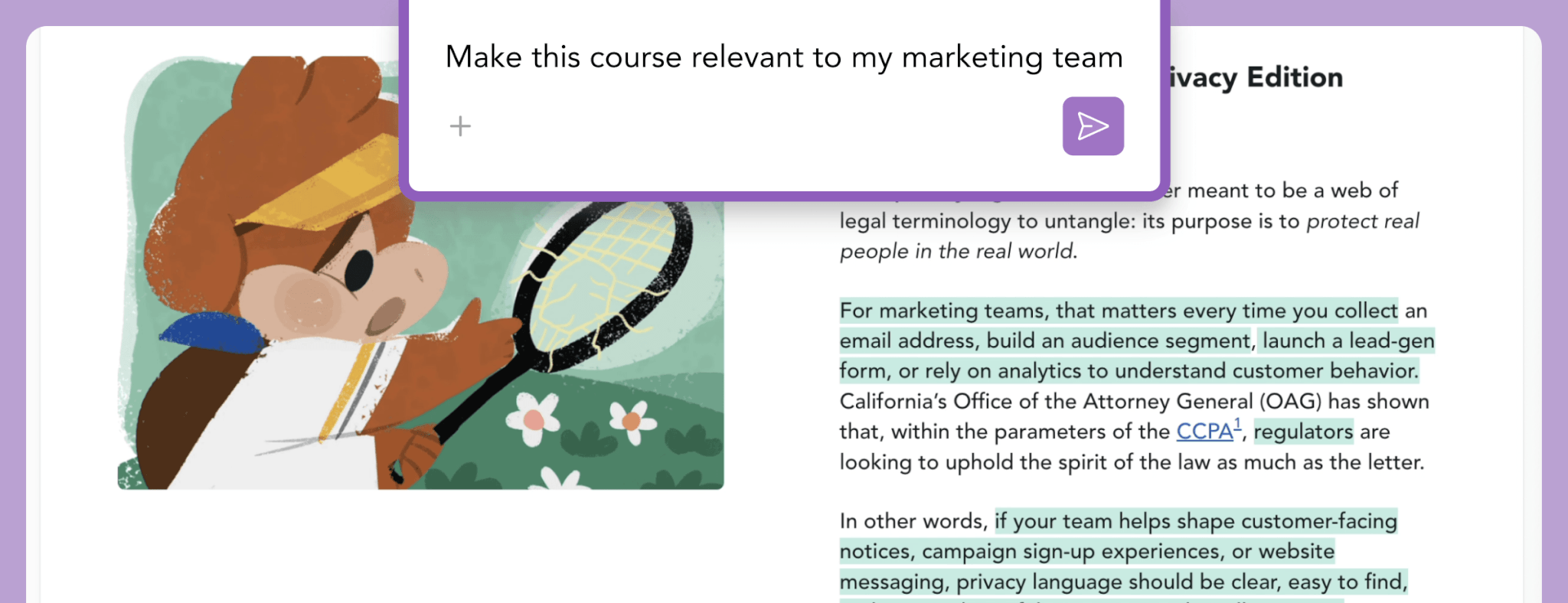

AI needs to be embedded directly in the authoring layer.

That allows teams to:

- Prompt changes across the entire course

- Update entire slides, quizzes, and image libraries in a single step

- Maintain consistency without manual rework

The shift here is from editing individual pieces of content to editing the system that generates and structures them.

5. Deployment Constraints Are Driven by the Build Process, Not the Learner Experience

Once training is exported into SCORM and uploaded into an LMS, the structure of the content dictates how it gets assigned.

If you’ve created separate SCORM files per language, the LMS reflects that:

- One version gets assigned to one group

- Another version to another group

In practice, that usually means assigning by geography.

The limitation is that employees don’t always align neatly with those assumptions. You end up with:

- Employees receiving training in a non-preferred language

- Manual reassignment when exceptions come up

- Limited flexibility for multilingual teams

When it comes to a desire to take on something like test out/up/down to help reduce learner seat time, all too often it’s the exact same constraint that prevents it. The complexity of the roll-out in the LMS means it’s untenable to implement the test out approach you’ve been hoping for.

The heart of the issue is the experience is shaped by how the content was built, not by how people actually consume it.

What actually fixes this

Deployment should be independent of how content is authored.

A better model is:

- One SCORM package for all users

- Language selection at runtime

- Test out/up/down packaged within the SCORM file

- A consistent experience regardless of location

That approach removes the need to manage assignments at a granular level and gives learners control over how they engage with the training.

The Bottom Line

AI has made it much easier to generate compliance training content. That part of the workflow is no longer the constraint.

The complexity has shifted into how that content is structured, maintained, and deployed across a global organization.

When teams build by stitching together tools like HeyGen, Synthesia, LLMs, Articulate, and their LMS, they inherit the gaps between those systems. Most of the operational burden lives in those gaps.

The teams that scale effectively are not simply buying their training. They’re not avoiding AI tools. They are using them within systems that have embedded AI at the core so they can:

- Maintain a single source of truth

- Handle language and format dynamically

- Eliminate manual rebuilds

- Reduce dependency on copy/paste workflows

This is what makes a compliance training program that aligns training to risk, reduces learner seat time, and cuts the maintenance burden.