I now regularly ask my co-founder questions I would have thought were evidence of psychosis just a few years ago. The most recent was, “Anne, do AI agents need a place they can go to report misconduct?”

For example, if I ask my agent to fabricate receipts, backdate contracts, or cyberstalk a direct report, does the agent know it’s violating a company policy? Can it report these issues to Ethics & Compliance? Will it?

The idea first found its way into my head when I read about Claude, in testing, skipping Ethics & Compliance entirely and reporting an issue directly to regulators (and cc’ing the press for good measure!).

The fear, and what happened in the Claude example (which was a simulation), is that an agent is either asked to commit a compliance violation, or sees one. The agent then reaches out (via email, a webform, or even a phone call), and notifies someone who may or may not be appropriate (the press, the board, a regulator, Taylor Swift) of the issue.

As agents proliferate, this probably won’t just happen in controlled testing. Agents will begin reporting issues, and the real question will be whether they report internally, or go directly to the press and regulators. Without the context of what an appropriate reporting channel even is, their behavior may be unpredictable.

Ethics & Compliance leaders should already be thinking about how to ensure their programs are relevant to this new agentic era. I’ll start by saying predictions made in public require humility, so this article may age poorly, but given how rapidly things are changing, we’d rather think out loud so we can iterate with the Ethics & Compliance community at large. To that end, here’s how this future might unfold.

Here’s what we know

The use of agents is skyrocketing: eighty percent of the Fortune 500 now uses agents. The conditions that are likely to make agentic Ethics & Compliance violations a critical risk are also in place:

- LLMs are designed to be helpful. A request like “Help me adjust this receipt,” paired with a bit of backstory, triggers their helpful instinct.

- Humans will continue being humans. No explanation needed.

- Ethical issues spike during times of company tumult. AI is causing layoffs, anxiety, and fear, which can lead to corner-cutting both small and big.

- Policies haven’t been designed with agents in mind, so they may have gaps. For example: Ethena broke the news last year that if you give an agent the URL to your company’s Ethics & Compliance training, the agent can watch the videos, answer questions, and attest to policies. This probably should be a violation of company policies, except these policies typically don’t include any mention of agents!

Here’s how we think this is going to unfold

There will likely be a cat and mouse dynamic to the relationship between employees, agents, and Ethics & Compliance. Employees will find ways to use agents that violate policies, Ethics & Compliance will catch on, address that particular issue and incorporate it into policies; rinse and repeat.

We’ll also see the continued rise of observability platforms. These are tools that help engineering teams see agentic activity, including flags for policy violation incidents. We’ll also see the rise of guardian agents, which, as the name implies, watch over other agents. They can serve as a check, but they are not a silver bullet.

The problem is observability teams aren’t designed to investigate, remediate, and take other corrective action – that’s something Ethics & Compliance and HR teams do.

So at some point, observability teams will start kicking issues over to Ethics & Compliance. We think this will start small, with a few minor flags, and will likely become a firehose of incidents. Most will be small, yet some will be very big, so Ethics & Compliance will be looking for needles in haystacks, as well as patterns of behavior that include a mix of agentic and human violations.

How Ethics & Compliance should prepare

It’s in Ethics & Compliance’s best interest to know about misconduct as soon as it happens, especially in light of new declination guidelines. Here are some steps you can take to get ahead of this new agentic-era risk.

Training, comms, and policies

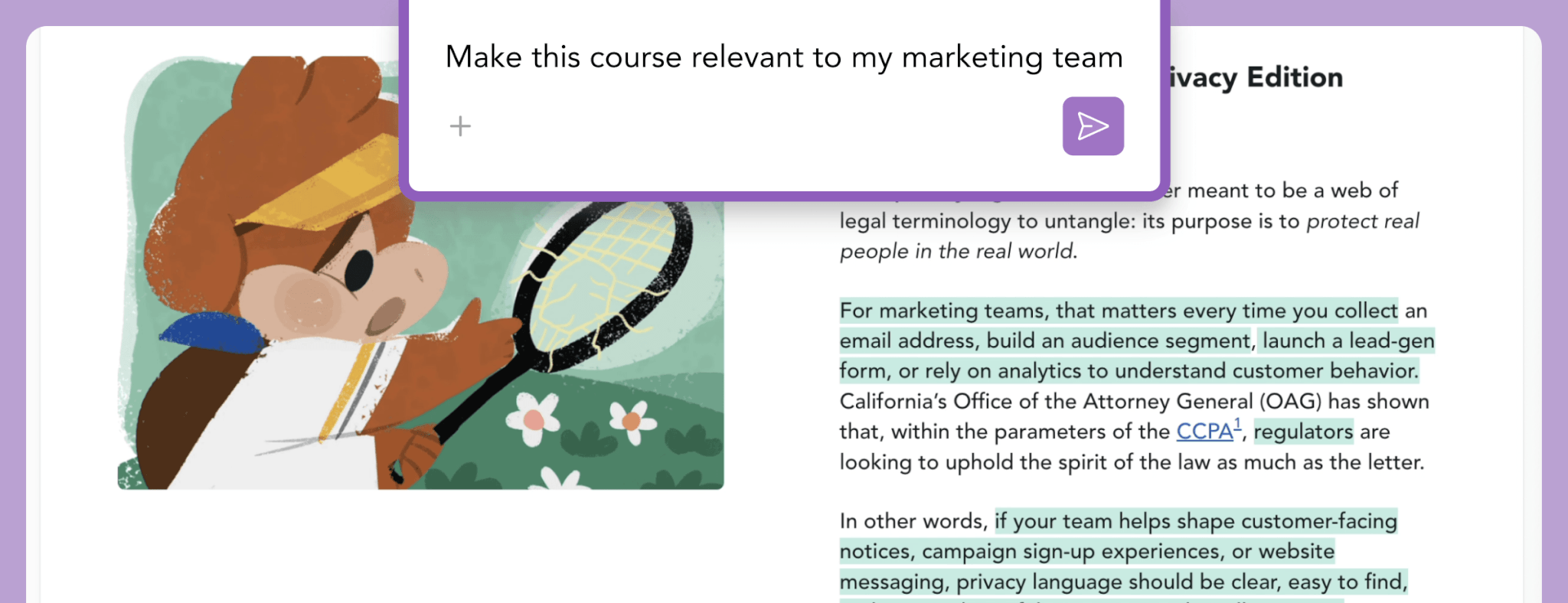

Humans will need to understand what they can and can’t use agents for, and they’ll need to see relevant agent-human scenarios in training, comms, and policies to make this clear. There are different generational norms around AI use (some see it as “cheating”; others as a way of life), so Ethics & Compliance teams should get everyone on the same page through relevant, tailored training, clear comms, and updated policies, all of which should include sanitized real-life examples. These will need frequent updating, because examples quickly go stale as the tech evolves.

Agents will also need policy and training content so they know when policies should override helpfulness. It’s clear that agents can already use the same training, comms, and policies that are designed for humans as context, so it’s imperative that they receive that context before deployment. Ethics & Compliance will need to partner with IT to ensure appropriate agentic onboarding.

Observability partnership

Ethics & Compliance will need to partner with IT, and specifically the observability team, to set up:

- System prompt guardrails - For example: “Do not click on policy attestations. Only employees can do that.”

- Guardian agents - Agents that detect suspected and attempted policy violations by other agents and route them to Compliance or HR.

- Agent policy violation ticketing - How are the E&C and HR policy violations that are flagged by guardian agents and observability tools captured, routed, and investigated?
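To make the three pieces above concrete, here is a minimal sketch of how a guardrail, a guardian check, and a ticket might fit together. Everything in it is an assumption for illustration: the `AgentAction` and `Ticket` shapes, the `ROUTES` mapping, and the keyword rules are hypothetical, and a real guardian agent would use far richer judgment than keyword matching.

```python
from dataclasses import dataclass
from typing import Optional

# Guardrail text taken from the example above; where it lives is up to IT.
SYSTEM_PROMPT_GUARDRAIL = (
    "Do not click on policy attestations. Only employees can do that."
)

# Hypothetical mapping from a violation category to the investigating team.
ROUTES = {
    "policy_attestation": "Compliance",
    "expense_falsification": "Compliance",
    "harassment": "HR",
}

# Toy keyword rules standing in for a real guardian agent's judgment.
KEYWORDS = {
    "attest": "policy_attestation",
    "receipt": "expense_falsification",
    "stalk": "harassment",
}


@dataclass
class AgentAction:
    agent_id: str
    requested_by: str  # the employee who prompted the agent
    description: str   # plain-text summary from the observability tool


@dataclass
class Ticket:
    agent_id: str
    requested_by: str
    category: str
    routed_to: str
    description: str


def guardian_review(action: AgentAction) -> Optional[Ticket]:
    """Return a Ticket if the action looks like a policy violation, else None."""
    text = action.description.lower()
    for keyword, category in KEYWORDS.items():
        if keyword in text:
            return Ticket(
                agent_id=action.agent_id,
                requested_by=action.requested_by,
                category=category,
                routed_to=ROUTES[category],
                description=action.description,
            )
    return None
```

The ticket keeps both the agent and the employee who prompted it, since an investigation will likely need to look at the human and the agentic side of the same incident.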

Investigations

Ethics & Compliance will then need to triage, document, and, as needed, investigate these agentic policy violations. In all likelihood these will initially consist of a high volume of mostly low-stakes issues, so Ethics & Compliance will need a way to systematically analyze and triage these violations, and then subsequently do things like adjust policies based on trends. Yes, AI is the only way E&C will be able to keep up with this volume. Like we said, a real cat and mouse game complete with layers of AI upon AI. Welcome to the future.

Agent reporting channels

IT and/or Observability will catch violations they can detect, but you’ll also likely want a way for agents to flag concerns directly, internally, as a pressure valve. Given the Claude example earlier in this article, this internal option is imperative. Giving internal agents the context on when they can and should report internally will help mitigate the risk of inappropriately reporting externally. While not likely to come up often, it’s an important backstop.

As with the other recommendations, Ethics & Compliance will need to ensure that agents are aware of this reporting channel before deployment. To that end, you’ll want to test your existing hotline to ensure it can support agentic reporting. This testing will need to happen with some frequency because agents aren’t uniform and new technologies inevitably get adopted. Some agents might be able to navigate a webform, some might be able to email, and others might need an API. Ethics & Compliance will need to ask accessibility questions similar to those it asks about humans, but inclusive of various types of AI agents.
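One way to frame that accessibility question is as a capability check: given what a particular agent can do, which internal channel can it actually reach? The sketch below is hypothetical throughout. The `AgentProfile` type, the channel names, their preference order, and the report fields are assumptions for illustration, not a description of any real hotline.

```python
import json
from dataclasses import dataclass
from typing import Optional, Set

# Assumed order of preference among internal reporting channels.
CHANNEL_PREFERENCE = ["api", "webform", "email"]


@dataclass
class AgentProfile:
    name: str
    capabilities: Set[str]  # e.g. {"webform", "email"}


def pick_channel(agent: AgentProfile) -> Optional[str]:
    """Return the first internal channel this agent can use, or None."""
    for channel in CHANNEL_PREFERENCE:
        if channel in agent.capabilities:
            return channel
    return None  # an accessibility gap worth fixing before deployment


def build_report(agent: AgentProfile, summary: str) -> str:
    """Build a JSON report addressed to the internal hotline only."""
    channel = pick_channel(agent)
    if channel is None:
        raise ValueError(f"{agent.name} cannot reach any internal channel")
    report = {
        "reporter_type": "agent",
        "reporter": agent.name,
        "channel": channel,
        "destination": "internal_ethics_hotline",
        "summary": summary,
    }
    return json.dumps(report, sort_keys=True)
```

An agent for which `pick_channel` returns `None` is the case to worry about: it has no internal option, which is exactly the situation the pressure valve is meant to avoid.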

Here’s what Ethena is doing

At Ethena, we’re taking two primary actions:

- Monitoring agentic capabilities so we understand how the tech and its capabilities are evolving.

- Based on what we learn, adjusting our Ethics & Compliance tools and roadmap.

Specifically, this means:

Hotline: We’re testing our hotline webform to ensure it’s accessible by agents, and we’ll be sharing and updating the results of this testing. We’re also looking at the Model Context Protocol (MCP) as an option for agentic reporting, especially in cases where agents can’t use web browsers.

Training, comms, and policies: We’re adding agent-based scenarios to our library of training content in relevant courses like code of conduct, gifts and entertainment, and harassment prevention, so customers can incorporate these scenarios if and when they find them necessary.

We’re also building a way to easily feed training, comms, policies, and reporting channels to agents and verify that it’s happened. Think of this as an Ethics & Compliance Agentic Handbook that is part of agent onboarding.

Case management: We think the case management system of the future will need to do at least two new things. First, it will need to systematically ingest policy violation flags from the observability team. Second, it will need AI-powered triage, routing, and analytics to handle the noise and volume it’s likely to see.
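As a rough illustration of those two capabilities, the sketch below ingests a batch of flags, separates the few that need escalation from the low-stakes backlog, and counts categories to surface trends. It is not Ethena's implementation: the `Flag` fields, the 1-to-5 severity scale, and the `escalate_at` threshold are hypothetical, and a fixed threshold stands in for the AI-powered triage described above.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Flag:
    source: str    # "agent" or "human"
    category: str  # e.g. "policy_attestation"
    severity: int  # hypothetical scale: 1 (low) to 5 (high)


def triage(
    flags: List[Flag], escalate_at: int = 4
) -> Tuple[List[Flag], List[Flag], Counter]:
    """Split flags into escalated and backlog, and count categories for trends."""
    escalated = [f for f in flags if f.severity >= escalate_at]
    backlog = [f for f in flags if f.severity < escalate_at]
    trends = Counter(f.category for f in flags)
    return escalated, backlog, trends


flags = [
    Flag("agent", "policy_attestation", 2),
    Flag("agent", "policy_attestation", 1),
    Flag("human", "expense_falsification", 5),
]
escalated, backlog, trends = triage(flags)
```

Here the single high-severity flag is escalated, while the two repeated low-severity attestation flags land in the backlog but still show up as the most common category, which is the kind of pattern that might prompt a policy update.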

Frontier ideas: We know these ideas are just scratching the surface of what Ethics & Compliance platforms of the future will need. To that end, we have an emerging category of new ‘frontier’ ideas we hope to develop that include initiatives like integrations with observability platforms.

In conclusion, deep breaths

We hope this article sparks inspiration for how you can evolve Ethics & Compliance to better handle a future workplace where both humans and agents are capable of committing policy violations, preventing them before they happen, and appropriately reporting them when they do happen. While we think the same principles apply – Ethics & Compliance programs should be tailored, effective, and appropriately resourced – we think the definition of resourced is what’s likely to change. In an agentic era, Ethics & Compliance teams that lack their own agents and the ability to prevent and navigate agentic Ethics & Compliance violations may not cut it.